Intro:

Technology is never satisfied with its present success. Once upon a time, computer programming meant writing binary machine code, and computer programmers were almost magical people who could control a big machine with their code. To modern programmers that sounds like a fairy tale. Just imagine Microsoft's chief programmer writing the Windows 8 operating system in machine code and, after each code word, asking an assistant for the next binary code.

Fortunately, the present scenario is not like that. We invented high-level programming languages that let us talk to the computer in something much closer to human natural language, with just a syntax and semantics of their own. We invented the mouse for a faster user interface. Have another look at the words "User Interface". I'm writing this article because of them.

User Interface:

Let's have a discussion about "User Interface". A user interface is something through which a user interacts with a computer [from the computer's perspective]. One of the earliest user interfaces was the keyboard. Think about the Unix shell, bash, terminal, command prompt or whatever you want to call that thing. That is the keyboard interface. But it does not suit most of us, because it is very hard to memorize all those commands. We need something that we can visualize. Here comes the mouse interface. We love the mouse because we can see what we are doing while we do it. But humans want more. Humans do not want to be confined by something like a wire. So we invented the wireless mouse and keyboard. But humans want even more. So think, think, think about an alternative solution for the user interface. "How can I use this mouse and keyboard with my house robot?" That is the million dollar question. The day is not far away when we will all (hopefully!) have a personal robot, just like today's personal computer [thanks Steve Jobs]. Shall we use a wireless keyboard or mouse to control it? The answer is NO. We have to find another solution.

Now let's think about our senses:

- Vision

- Audition

- Smell: won't work as an interface

- Taste: this too

- Touch: this may work

Audition:

This may be our next user interface. We'll speak and the machine will work. That would be great fun. This interface is actually of great use in Human-Computer Interaction (HCI) and Human-Robot Interaction (HRI). It is very cheap too. We need only a microphone to let the machine hear our voice. Of course, the machine should also be able to understand what we are saying to it. That is the biggest part. But the main problem of this system is that background noise can reduce its performance greatly. And we live in a noisy environment.

Vision:

I love computer vision because it is fun to play with, so my words may be biased. It needs only a single camera [maybe the cheapest webcam] to understand a 2D scene, and a second camera can be added to understand a 3D scene. A cluttered background is a problem here too, but there are enough options to play with.

Hand Gesture Recognition:

Enough background. Let's dive into computer vision. With computer vision we can do a great deal of work, like identifying a person by their face, monitoring someone's facial expression, building surveillance systems and so on. But what I'm interested in is the user interface. For that reason, gestures are of the greatest interest, and hand gesture recognition can be a very effective user interface. We can control our computer or robot with hand gesture recognition techniques no matter what the noise level of the workplace is.

Procedure:

Now we will look into the procedure of the hand gesture recognition technique. This process is not written in any standard style. I'm just writing down the steps I needed to achieve my result [as far as I've got].

1. Get Image:

This is done using a video interface that uses the built-in webcam to grab a frame at every moment.

Fig 1: Input Image

2. Skin Color Segmentation:

Image segmentation is the key point of your recognition system. There are several types of segmentation process, like color based segmentation, shape based segmentation etc. I've used color based segmentation, more specifically skin color segmentation, because the hand region has a fairly stable color range that does not differ much from person to person. A notable thing is that the segmentation should not be done in the BGR color space, because BGR values change a lot with light intensity. You can use the HSV or YCrCb color space instead, where brightness is kept apart from the color information. I've used the YCrCb color space.

Fig 2: Skin Color segmented image
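The article does not show its code, so here is only a minimal sketch of the idea in plain Python. It converts each BGR pixel to YCrCb with the usual 8-bit formulas and keeps pixels whose Cr and Cb fall inside a skin range. The range values (`CR_RANGE`, `CB_RANGE`) and the function names are assumptions for illustration, not the values used for the figures; they need tuning for your camera and lighting. With OpenCV, `cv2.cvtColor` with `cv2.COLOR_BGR2YCrCb` followed by `cv2.inRange` would be the usual way to do the same thing.

```python
# Assumed skin range in the Cr and Cb channels (illustrative, tune as needed).
CR_RANGE = (133, 173)
CB_RANGE = (77, 127)


def bgr_to_ycrcb(b, g, r):
    """Convert one 8-bit BGR pixel to an 8-bit (Y, Cr, Cb) triple."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cr = (r - y) * 0.713 + 128
    cb = (b - y) * 0.564 + 128

    def clamp(value):
        return max(0, min(255, int(round(value))))

    return clamp(y), clamp(cr), clamp(cb)


def skin_mask(image):
    """image: rows of (b, g, r) tuples. Returns rows of 0 / 255 values."""
    mask = []
    for row in image:
        out_row = []
        for b, g, r in row:
            # Y (brightness) is ignored on purpose: only the color is tested.
            _, cr, cb = bgr_to_ycrcb(b, g, r)
            is_skin = (CR_RANGE[0] <= cr <= CR_RANGE[1]
                       and CB_RANGE[0] <= cb <= CB_RANGE[1])
            out_row.append(255 if is_skin else 0)
        mask.append(out_row)
    return mask
```

Because the brightness channel Y is skipped in the test, the same hand tends to stay inside the range when the light gets stronger or weaker, which is the reason for leaving BGR in the first place.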

3. Noise Reduction:

Look at figure 2. You can see some noise in the image: some black dots over the hand region. To reduce this noise, we should smooth our image. I've used the Gaussian Blur function to smooth the image.

Fig 3: Noise Reduction
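As a rough sketch of what the smoothing does, the plain Python below blurs a 0/255 mask with a 3x3 Gaussian approximation and then thresholds it again so the result is still a binary mask. The kernel size, the replicated border and the re-threshold step are my assumptions; the article only says that Gaussian blur was used. In OpenCV the blur itself would be `cv2.GaussianBlur`.

```python
# 3x3 approximation of a Gaussian kernel; the weights sum to 16.
KERNEL = [[1, 2, 1],
          [2, 4, 2],
          [1, 2, 1]]


def gaussian_blur3(mask):
    """Blur a 2D list of 0..255 values with the 3x3 kernel above."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    # Replicate the border pixel when the kernel leaves the image.
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += KERNEL[dy + 1][dx + 1] * mask[yy][xx]
            out[y][x] = total // 16
    return out


def smooth_mask(mask, threshold=127):
    """Blur, then threshold again (assumed step) to get a clean 0/255 mask."""
    blurred = gaussian_blur3(mask)
    return [[255 if v > threshold else 0 for v in row] for row in blurred]
```

A single black dot inside a white region is outvoted by its white neighbours and disappears, and a single white speck on the background disappears the same way.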

4. Finding Connected Component:

Now our task is to narrow our search domain. For this reason, we will try to find the connected components, and we will assume that the biggest connected component is our hand.

Fig 4: Finding Biggest Connected Component

Fig 5: Selecting Component region.

Now we can see that our search domain is reduced to only a line of points [the outline of the component] instead of an image full of points.
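The step above can be sketched in plain Python as a breadth-first search over the mask that keeps the largest 4-connected group of white pixels, followed by a helper that keeps only the pixels on its edge. The function names and the choice of 4-connectivity are assumptions for illustration. Note that `boundary` returns the edge pixels in no particular order; OpenCV's `cv2.findContours` gives an ordered contour, which is what you would want for the later steps.

```python
from collections import deque


def _neighbours(x, y):
    """The four direct neighbours of a pixel (4-connectivity, assumed)."""
    return ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1))


def largest_component(mask):
    """Return the (x, y) pixels of the biggest connected white region."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    best = []
    for sy in range(h):
        for sx in range(w):
            if mask[sy][sx] == 0 or seen[sy][sx]:
                continue
            # Flood this region with a breadth-first search.
            queue = deque([(sx, sy)])
            seen[sy][sx] = True
            component = []
            while queue:
                x, y = queue.popleft()
                component.append((x, y))
                for nx, ny in _neighbours(x, y):
                    if (0 <= nx < w and 0 <= ny < h
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            if len(component) > len(best):
                best = component
    return best


def boundary(component):
    """Keep only pixels that touch something outside the component."""
    pixels = set(component)
    return [p for p in component
            if any(n not in pixels for n in _neighbours(*p))]
```

For a filled 3x3 blob, `boundary` keeps the 8 outer pixels and drops the centre, which is the "line of points" idea in miniature.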

5. Finding Finger Tips:

Now we'll try to locate the fingertips in our component image. For this purpose, I'm proposing 2 methods:

1. Convex Hull Method

2. Peak Valley Method

In the convex hull method, the component is passed to a convex hull finder to get its hull points. It is better not to bother with text here and to show the visual result instead.

Fig 6: Component Region Selected

Fig 7: Selected region is passed to convex hull finder

Fig 8: Hull points are minimized and possible finger tips are selected.
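For readers who prefer code to pictures, here is a small sketch of the convex hull step using Andrew's monotone chain algorithm in plain Python. This is a stand-in chosen for illustration, not necessarily the hull finder used for the figures; in OpenCV the call would be `cv2.convexHull`. The `merge_close` helper is my guess at what "hull points are minimized" means: hull points that sit closer together than a hypothetical `min_dist` are treated as one candidate fingertip.

```python
import math


def cross(o, a, b):
    """Z component of the cross product (a - o) x (b - o)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Monotone chain convex hull; returns the hull vertices in order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower = []
    for p in pts:
        # Drop points that do not make a strict turn to the same side.
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each half is the first point of the other half.
    return lower[:-1] + upper[:-1]


def merge_close(hull, min_dist):
    """Keep one point out of every cluster of nearby hull points (assumed step)."""
    kept = []
    for p in hull:
        if all(math.hypot(p[0] - q[0], p[1] - q[1]) >= min_dist for q in kept):
            kept.append(p)
    return kept
```

A fingertip usually produces several hull points next to each other, so some merging like this is needed before the hull points can be counted as fingers.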

Peak Valley Method: again, no bothering with text...

Fig 9: Peaks and valleys are selected

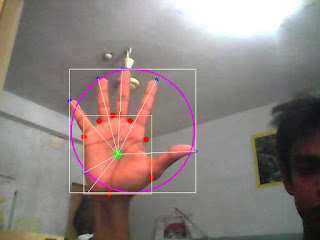

Fig 10: Relation of peaks with palm center

Fig 11: Distances between palm center and finger tips.

From the last image we can easily say that the fingertips are located at roughly the same distance from the palm center.
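One possible reading of the peak valley idea, sketched in plain Python: walk along the ordered contour, measure the distance of every point from the palm center, and mark the local maxima as peaks (fingertip candidates) and the local minima as valleys (the gaps between fingers). The article does not say how the palm center is found, so using the centroid of the contour points is purely an assumption here, as are the function names.

```python
import math


def centroid(points):
    """Mean of the points, used here as an assumed palm center."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def radial_distances(contour, center):
    """Distance of every contour point from the center, in contour order."""
    cx, cy = center
    return [math.hypot(x - cx, y - cy) for x, y in contour]


def peaks_and_valleys(values):
    """Indices of strict local maxima and minima of a cyclic sequence."""
    n = len(values)
    peaks, valleys = [], []
    for i in range(n):
        # values[i - 1] wraps to the last element when i is 0.
        prev, cur, nxt = values[i - 1], values[i], values[(i + 1) % n]
        if cur > prev and cur > nxt:
            peaks.append(i)
        elif cur < prev and cur < nxt:
            valleys.append(i)
    return peaks, valleys
```

On a real contour the distance profile is noisy, so it would need smoothing or a minimum gap between peaks before the peaks can be trusted as fingertips.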

Disclaimer:

The aim of this article is not to teach you Computer Vision or User Interface. It's just a way of sharing my scattered thoughts in a semi-organized form. If you learn something or come up with some idea from this article, that will be a total coincidence. I'm not responsible for that learning or idea.

Thanks to all for reading patiently(!).

Result on YouTube:

1. Hand Position Estimation using OpenCV

http://www.youtube.com/watch?v=0KfYsXDrXdw

2. Finding Finger Tips from detected hand region using OpenCV

http://www.youtube.com/watch?v=y_7pM4kodHQ